= ASTRONAUTICAL EVOLUTION =

Issue 151, 18 November 2019 – 50th Apollo Anniversary Year

The Destiny of Civilisations – Fire, Iron and Gold

Civilisation enabled or destroyed by technology?

To continue our train of thought from the previous post: I have seen a similar distinction between dynamic and static models of society in another context, though here they might better be called technocratic versus technophobic.

The natural mindset of anybody who is into technology is to assume that a successful human future must see increasingly powerful technologies coming into use, until humanity merges with its machines. In such a future, our descendants are free to roam the galaxy.

But what of the technophobic mindset, in which science and technology are seen as dehumanising, and the spiritual values of love, religious belief and living in the moment are held to be more important than – and threatened by – material progress?

Tolkien’s and Lewis’s fictional worlds

One Ring to rule them all, One Ring to find them,

One Ring to bring them all, and in the darkness bind them.

(The inscription on Sauron’s golden ring of power, according to J.R.R. Tolkien, The Fellowship of the Ring, book 1, ch.2.)

A simplistic reading of J.R.R. Tolkien and of his friend and fellow Christian C.S. Lewis might well suggest to the reader that these writers were hostile to technology.

In The Lord of the Rings, Sauron’s ring is both the ultimate technological achievement and the most evil artefact ever forged. It cannot be used for good, but can only be destroyed before it enslaves everybody under the dictatorship of the Dark Lord.

Saruman’s fortress at Isengard is portrayed as a hotbed of dehumanising industrial activity, and is of course overthrown by the protagonists.

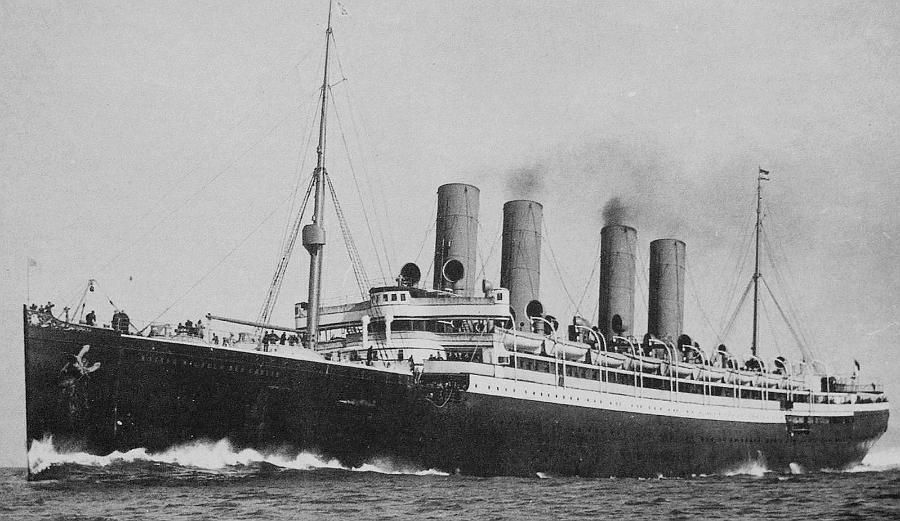

In Lewis’s novel The Voyage of the Dawn Treader, part of his history of Narnia, Eustace compares Caspian’s one-masted sailing ship unfavourably with ships on Earth such as the Queen Mary, pointing out that the Dawn Treader is not much bigger than a lifeboat (diary entry in ch.2). But Lewis’s heart is clearly with the sailing ships of Narnia, and he never allows Narnia (or its southern rival, the evil empire of Calormen) to industrialise.

His anti-technological stance is more explicit in his interplanetary trilogy, where space travel as we understand it today is portrayed as evil, and the evil in the third volume, That Hideous Strength, is a product of perverted science. Lewis is quite clear (in his reply to J.B.S. Haldane’s review of this novel) that he is not opposed to science as such, but that he thinks it is vulnerable to evil tendencies which would use its popularity and effectiveness to insinuate the corruption of mankind and its subjection to the dictatorship of devilish beings.

Although these authors have little interest in discussing technology and industrialisation as such, their attitude towards them is clearly highly critical. A writer such as George R.R. Martin in A Song of Ice and Fire (a.k.a. Game of Thrones), by contrast, has so little interest in the topic that he ignores it altogether.

Technology for good and evil

Yet a limited level of technology is clearly indispensable to any kind of life for both human characters and those with human-equivalent intelligence – including the village of Hobbiton in the Shire and the dwarfish, faunish and talking animal settlements of Narnia.

Within this limited level we are talking of the ability to make and use fire, the mining and working of metals, construction of buildings of wood and stone, building of sailing ships, production of goods such as swords, coins, jewels, manuscript books, furniture, crockery, candles and oil lamps, fireworks, clothing, and so on.

We are clearly excluding any machine or vehicle powered by fossil fuels, printed books, gunpowder weapons, nuclear power and nuclear weapons, antibiotics, electrical appliances, electronics, radio and television, and so on. Transport is by horse (possibly winged), or indeed by eagle or dragon, as well as on foot, but never by steamship, steam railway or aircraft.

Sometimes high-tech-equivalent capabilities are made available to particular characters. The hermit in Lewis’s The Horse and his Boy has, in effect, television in the form of a pool in which he watches distant events, as does Galadriel with her Mirror in The Lord of the Rings. But in these instances the capability is provided by magic, not by industrial technology as we know it. Magic, by its nature, requires a special individual to use it: unlike technology, it cannot be mass-produced or mass-marketed (despite Arthur C. Clarke’s famous dictum that any sufficiently advanced technology is indistinguishable from magic).

In other words, technological development in such fantasies is held at a medieval level. It may legitimately exceed that level only in the hands of someone of exceptional spiritual purity. Otherwise such advanced capabilities, inevitably seized for selfish ends, become an evil that drives the plot: a conflict between those who would build an empire on these higher technologies and those who reject them, the latter being the heroes of the story who eventually triumph.

Is this dividing line artificial or real? Why should a medieval level be desirable but further development be undesirable? Can one discern any kind of development barrier here?

One writer who can is Sunday Times journalist Bryan Appleyard, who, having noted that ancient peoples had neither modern medicine nor electronics, continued:

“Science and technology have not developed gradually over the whole history of human culture; they have suddenly exploded all about us. Their sheer, profligate effectiveness is something utterly novel. […] It is central to my thesis that […] science is a fundamentally new way of knowing and doing things. I believe that an examination of scientific history makes this point obvious. I find it absurd, almost sentimental, to say, as would Bronowski, that a plough is like a CD-player. They are fundamentally different. The designer of the latter has to have a different way of knowing from the maker of the former.”

My own view agrees with that of Dr Jacob Bronowski: technological development is a continuous path of progress, from its beginnings in prehistory until it reaches some plateau of maximum possible capability at some point in our future. Once our ancestors hit on the trick of using one stone to give a sharper edge to another, they were launched irrevocably on a course which would lead ultimately to artificial intelligence, genetic engineering and starships – barring catastrophic accidents, of course.

Coming at the question from a very different angle, Bill Joy (cofounder and chief scientist of Sun Microsystems) wrote in Wired magazine:

“The 21st-century technologies – genetics, nanotechnology, and robotics (GNR) – are so powerful that they can spawn whole new classes of accidents and abuses. Most dangerously, for the first time, these accidents and abuses are widely within the reach of individuals or small groups. […] Thus we have the possibility not just of weapons of mass destruction but of knowledge-enabled mass destruction (KMD), this destructiveness hugely amplified by the power of self-replication. I think it is no exaggeration to say we are on the cusp of the further perfection of extreme evil, an evil whose possibility spreads well beyond that which weapons of mass destruction bequeathed to the nation-states, on to a surprising and terrible empowerment of extreme individuals.”

Here we have something very much like what was clearly at work at the back of Tolkien’s and Lewis’s minds: a risk/benefit calculation.

The idea is that any given technology has potential benefits, and potential risks. When the benefits outweigh the risks, mankind as a whole benefits from introduction of that technology. When the risks outweigh the benefits, then on balance we suffer.

One can see Tolkien and Lewis implicitly applying a calculation in which simple technologies provide a net benefit, but as new technologies grow more powerful, their risks rise faster than their benefits. Eventually the risks overtake the benefits, so technological progress continues only until it meets a natural ceiling, at which the latest technologies to be introduced destroy humanity.

This destruction could come through enslavement so thorough that people’s moral degeneration is complete. Sauron’s ring symbolises this well, standing for real-world technologies such as mass production and consumerism, mass surveillance leading to a global dictatorship (like that portrayed in Orwell’s Nineteen Eighty-Four), or genetic modification that removes the traits which make people receptive to religious ideas (as in Huxley’s Brave New World).

Alternatively, the destruction could be literal physical annihilation, whether following a global nuclear holocaust or through more speculative man-made catastrophes such as those raised by Bill Joy: a global pandemic (deliberate or accidental) caused by an engineered virus, or the extermination of mankind by its own intelligent robots (whether anthropomorphic, purely software, or nanoscale).

The first message from these writers, then, is that humanity needs the wisdom to develop technology far enough to provide a comfortable life, but no further.

The second message is that, in their view, this optimum was reached in the Middle Ages: we have already gone too far, and must renounce such things as nuclear, genetic and artificial intelligence technologies while we still have time, if we are not to end up destroying ourselves. Even the use of fossil fuels now stands condemned by the possibility of disastrous climate change, despite the immense benefits these fuels have brought us in the past.

Who is right?

Is it in fact the case that the kinds of technologies developed since the beginning of the industrial revolution – including 21st-century technologies of immense power such as genetics, artificial intelligence, nanotech and nuclear fusion – are so dangerous that they will in the end destroy us? Clearly, nobody yet knows.

Controlled nuclear fusion was at first seen as an imminent breakthrough in the supply of clean energy. Although steady progress has been made, it has taken much longer and cost much more than expected, and experimental reactors have so far at best approached break-even, without yet producing more fusion power than is put in to heat the plasma. Perhaps the replicating technologies that Bill Joy so feared will turn out to be just as intractable in practice?

Regarding the much-invoked need for “wisdom” in our efforts to progress, I believe that wisdom is not the product of spiritual enlightenment before the event, but can only be earned through long and painful experience. Whether in transport, energy supply, medicine or computerisation, some disasters are bound to happen, because we cannot know in advance the safe limits we should observe. Engineering is never an armchair activity, no matter how ambitious the computer simulations run beforehand.

As the two pictures shown above suggest – the coal-fired iron-working smithy and the coal-fired iron ship from two thousand years later – there is a continuity in science and technology from the earliest beginnings of humankind to the present, and on to any high-tech future we may embark upon. Making fire by burning wood is only the first step in a progression of increasingly concentrated energy sources: coal, oil, gas, nuclear fission and fusion, and perhaps matter-antimatter annihilation. Likewise, symbolic spoken language leads to writing, then printing, the telegraph, radio and television, computers, and artificial intelligence. And so on. There is no natural boundary between low-tech and high-tech societies – though there are quantum leaps in social organisation when new ideas or products come into widespread use, and it is these leaps that Appleyard, Tolkien and Lewis clearly had in mind.

What about the claim that medieval-level technologies are sufficient for a comfortable life? Why not adopt the idyllic rustic life of Hobbiton or Narnia – also dramatised in the ostensibly low-tech forest life of the Na’vi in James Cameron’s Avatar?

No, that would be an illusion. The reality would be the life shown in Martin’s Westeros: dirt, poverty, hard labour and famine for the mass of the population, and disease, war, superstition, ignorance and the threat of torture and summary execution both for them and for the aristocracy ruling over them. Without modern medicine, democracy, human rights, education and communications media, life would be intolerable for us – yet these are products of a society that needs intelligent and discerning consumers for its mass markets. Remove the modern high-tech consumer economy, and the logic of power inevitably drags us back towards serfdom under a ruling class of competing warlords.

As I suggested in a recent paper on the philosophy of the starship, if humanity – or an analogous intelligent species of another star – does succeed in expanding its civilisation into space, then it will be because of a culture in which meeting the challenges of developing new technologies is seen as virtuous. One good reason for such a culture is its consciousness of the horrors of the only alternative model of society we know. Another is the fact that any successful high-tech society will dominate low-tech ones as completely as European colonists dominated indigenous peoples in the rest of the world.

Conversely, any culture which bans progress beyond the medieval level must remain confined to its planet of origin. By the same token it will lack radio telescopes, which can only exist as the product of a scientific-industrial civilisation, and so will remain invisible to SETI searches.

A technophobic civilisation will therefore fail to be the kind of static civilisation that SETI requires – but so will a technocratic one, as argued in the previous post.

The terrors of the past are well documented in history books. The terrors of the future are speculative: one possible scenario among several. For my money, virtue clearly lies in braving the future in pursuit of the continued betterment of mankind.

References

For C.S. Lewis’s reply to Professor Haldane, see Lewis, Of Other Worlds (HarperCollins, 1966), pp.117-34, esp. pp.123-27.

Bryan Appleyard, Understanding the Present: Science and the Soul of Modern Man (Pan Macmillan, 1992), p.4.

Bill Joy, “Why the Future Doesn’t Need Us”, Wired, April 2000.

Stephen Ashworth, “The Starship Philosophy: Its Heritage and Competitors”, JBIS, vol.67, no.11/12 (Nov./Dec. 2014), pp.447-59.